Test deployed models against manipulation attacks, data poisoning, and robustness vulnerabilities to prevent exploitation. Keep models resilient throughout the production lifecycle.

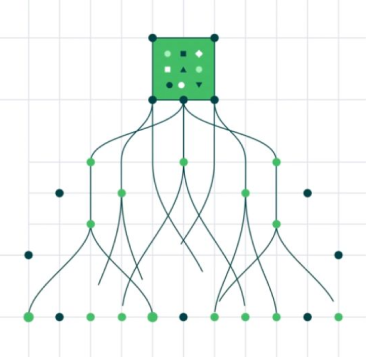

Systematically generates and tests adversarial examples, evaluating whether small, intentional perturbations cause model mispredictions
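As a rough illustration of what an adversarial example test can look like, the sketch below applies a fast-gradient-sign style perturbation to a toy logistic model and checks whether the predicted class flips. The model, its weights, the input, and the `epsilon` budget are all hypothetical values chosen for illustration; this is not a description of the product's actual implementation.

```python
import math

def predict_proba(weights, bias, x):
    """Probability of the positive class under a toy logistic model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def fgsm_perturb(weights, bias, x, label, epsilon):
    """Move each feature by epsilon in the direction that increases the log loss."""
    p = predict_proba(weights, bias, x)
    # For a logistic model, the gradient of the log loss w.r.t. x is (p - label) * w.
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical model and input, assumed purely for this example.
weights, bias = [2.0, -1.0], 0.0
x, label = [0.3, 0.2], 1

x_adv = fgsm_perturb(weights, bias, x, label, epsilon=0.25)
original_class = int(predict_proba(weights, bias, x) >= 0.5)
adversarial_class = int(predict_proba(weights, bias, x_adv) >= 0.5)
misprediction = original_class != adversarial_class
print(original_class, adversarial_class, misprediction)
```

In practice the same check would be repeated across many inputs and perturbation budgets, and the share of flipped predictions reported as a robustness measure.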

Analyzes incoming training and inference data for patterns that indicate intentional poisoning attempts or contamination campaigns
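One simple signal that data may have been tampered with is a value that sits far outside the bulk of a feature's distribution. The sketch below flags such values with a robust z-score based on the median absolute deviation (MAD). It is a minimal example under assumed inputs: the `flag_outliers` helper, the 3.5 threshold, and the sample values are illustrative, and real poisoning detection would combine several signals rather than rely on one statistic.

```python
from statistics import median

def flag_outliers(values, threshold=3.5):
    """Return indices whose MAD-based robust z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread in the data, so the robust z-score is undefined.
        return []
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]

# Hypothetical feature column with one suspicious record at index 6.
feature_values = [10.1, 9.8, 10.3, 9.9, 10.0, 10.2, 25.0]
suspicious = flag_outliers(feature_values)
print(suspicious)
```

Flagged records are candidates for review, not proof of an attack; legitimate rare events can trip the same threshold.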

Evaluates model response to input variations, identifying vulnerable feature ranges or decision boundaries susceptible to manipulation
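A basic way to probe input sensitivity is to hold a baseline input fixed, sweep one feature across a range, and record where the prediction changes. The sketch below does this for a toy classifier; `toy_model`, the baseline input, and the sweep range are hypothetical stand-ins for a real deployed model and its valid feature ranges.

```python
def sweep_feature(model, baseline, index, low, high, steps):
    """Return (value, prediction) pairs as one feature moves from low to high."""
    results = []
    for k in range(steps + 1):
        value = low + (high - low) * k / steps
        probe = list(baseline)
        probe[index] = value
        results.append((value, model(probe)))
    return results

def flip_points(results):
    """Feature values at which the prediction differs from the previous step."""
    return [cur[0] for prev, cur in zip(results, results[1:]) if prev[1] != cur[1]]

def toy_model(x):
    """Hypothetical linear classifier, assumed only for this example."""
    return int(2.0 * x[0] - 1.0 * x[1] >= 0.0)

results = sweep_feature(toy_model, [0.3, 0.3], index=0, low=0.0, high=1.0, steps=10)
flips = flip_points(results)
print(flips)
```

A flip point close to the baseline value suggests the input sits near a decision boundary, where a small manipulation of that feature would be enough to change the outcome.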

Subjects models to extreme valid input ranges, edge cases, and unusual data combinations, verifying stable predictions

Identifies model architecture weaknesses, parameter sensitivity issues, and exploitable decision logic

Runs realistic attack scenarios against live models, documenting exploitation vectors and impact magnitude

Quantifies overall model security posture, enabling prioritization of remediation and resource allocation

Reduces exposure to adversarial attacks and manipulation

Increases model stability under malicious or unexpected inputs

Strengthens the organization's AI security reputation and customer trust - Significant

Demonstrates proactive security measures to regulators and auditors - 100% evidence-based

Turn operational complexity into measurable performance gains.