Convert raw model predictions and feature weights into clear business narratives that non-technical stakeholders understand. Increase model adoption by 58% through interpretability.

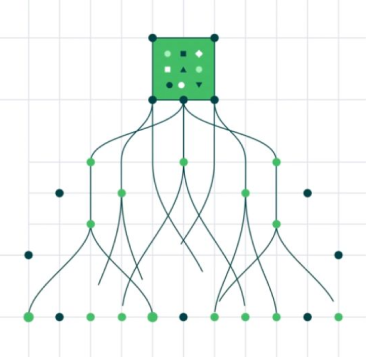

Interprets complex model outputs, feature contributions, and decision paths, synthesizing interpretable explanations from raw data science outputs

Converts numeric feature weights, SHAP values, and statistical metrics into plain-language business impact narratives

Tailors explanation language and depth to the audience (such as business executives, technical analysts, or compliance reviewers) and to the use case

Ensures explanations are reproducible, auditable, and consistent across similar predictions, enabling regulatory compliance

Readable Summarization - Bridges the gap between data science terminology and business language using domain-relevant examples and metaphors

Captures user feedback on explanation clarity and usefulness, refining explanation generation and feature importance interpretation

Provides what-if explanations showing how a prediction would change with different input values, enabling scenario exploration
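To make two of the capabilities above concrete, here is a minimal sketch of how signed feature contributions (for example, SHAP values) might be turned into plain-language sentences, and how a what-if comparison could be computed. It is an illustration only, not the product's implementation: the function names, the feature names, and the simple linear scoring model are all assumptions made for the example.

```python
from typing import Dict, List


def narrate_contributions(contributions: Dict[str, float], top_n: int = 3) -> List[str]:
    """Turn signed per-feature contributions into short plain-language sentences.

    `contributions` maps a feature name to its signed effect on the score
    (hypothetical values; in practice these might come from a SHAP explainer).
    """
    # Rank features by the size of their effect, ignoring the sign.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    sentences = []
    for name, value in ranked[:top_n]:
        if value == 0:
            continue  # A zero contribution has nothing to report.
        direction = "raised" if value > 0 else "lowered"
        label = name.replace("_", " ").capitalize()
        sentences.append(f"{label} {direction} the score by {abs(value):.2f}.")
    return sentences


def linear_score(weights: Dict[str, float], inputs: Dict[str, float], bias: float = 0.0) -> float:
    """Score an input with a simple linear model (an assumption for this sketch)."""
    return bias + sum(weights[k] * inputs[k] for k in weights)


def what_if(weights: Dict[str, float], inputs: Dict[str, float],
            feature: str, new_value: float, bias: float = 0.0) -> float:
    """Return how much the score changes if one input takes a different value."""
    changed = {**inputs, feature: new_value}
    return linear_score(weights, changed, bias) - linear_score(weights, inputs, bias)


if __name__ == "__main__":
    # Hypothetical contributions for a single credit-risk prediction.
    example = {"late_payments": 0.42, "account_age": -0.15, "income": -0.05}
    for sentence in narrate_contributions(example, top_n=2):
        print(sentence)

    # Hypothetical what-if: the same customer with one late payment instead of three.
    weights = {"late_payments": 0.2, "account_age": -0.05}
    inputs = {"late_payments": 3, "account_age": 4}
    print(f"Score change: {what_if(weights, inputs, 'late_payments', 1):+.2f}")
```

A real deployment would replace the linear model with the actual predictor and adapt the sentence templates to each audience; the sketch only shows the shape of the transformation from numbers to narrative.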

Increases stakeholder trust and adoption of data science outputs - 58% adoption improvement

Reduces clarification cycles between data scientists and business users - 70% efficiency gain

Improves model defensibility for regulators and auditors through transparent explanations - 100% explainability compliance

Increases acceptance of model recommendations by improving user understanding - 65% improvement

Turn operational complexity into measurable performance gains.