Data scientists! We hear about them everywhere, and there is a great deal of hype around data science, data scientists and the slew of accompanying technologies such as Hadoop, Hive, Mahout and HBase, along with buzzwords like big data, machine learning, information retrieval and search. This makes one wonder what all the fuss is about.

A data scientist combines the skills of a traditional statistician, a data engineer and someone with solid expertise in business decision-making. He/she is generally both an IT person and a statistician. The work of a data scientist can be more exciting in a startup than in a big company, because he/she gets to design and build an entire architecture from scratch, one that can accommodate massive and rapidly growing datasets.

Data scientists are inquisitive: they are expected to do exploratory analysis and to question existing assumptions. They can help devise efficient, optimized algorithms for the products an organization is trying to build. Whereas a developer's main focus is on scaling existing algorithms, a data scientist has to formulate algorithms that scale and come up with innovative ones that draw on mathematics, statistics, machine learning and data mining to solve the practical problems at hand.

An ideal data scientist is a unicorn who not only understands the mathematical constructs behind machine learning algorithms but can also implement those algorithms at scale, building prototypes and running experiments on massive datasets. For this reason, he/she should be well versed in Hadoop's MapReduce framework, which splits a computation into map and reduce steps that run in parallel across a cluster, reducing the processing time of many machine learning algorithms.
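To make the map and reduce idea concrete, here is a minimal single-machine sketch of the classic word-count example. It is an illustration of the pattern, not Hadoop's API: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are hypothetical, and a Python thread pool stands in for cluster nodes. Because of CPython's global interpreter lock, the threads here mainly show that map tasks are independent of one another; real speedups come from a framework like Hadoop distributing those tasks across many machines.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_phase(line: str) -> list[tuple[str, int]]:
    # Emit a (word, 1) pair for every word in one input record.
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(mapped: list[list[tuple[str, int]]]) -> dict[str, list[int]]:
    # Group all emitted values by key, as the framework does between map and reduce.
    groups: dict[str, list[int]] = defaultdict(list)
    for pairs in mapped:
        for key, value in pairs:
            groups[key].append(value)
    return groups


def reduce_phase(key: str, values: list[int]) -> tuple[str, int]:
    # Combine all values for one key into a single result.
    return key, sum(values)


def word_count(lines: list[str], workers: int = 4) -> dict[str, int]:
    # Map tasks are independent, so they can run concurrently
    # (the thread pool is a stand-in for cluster nodes in this sketch).
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = list(pool.map(map_phase, lines))
    grouped = shuffle_phase(mapped)
    # Each key is reduced independently, so reduce tasks can also run concurrently.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lambda kv: reduce_phase(*kv), grouped.items()))
    return dict(results)


if __name__ == "__main__":
    docs = ["big data big models", "data science"]
    print(word_count(docs))  # {'big': 2, 'data': 2, 'models': 1, 'science': 1}
```

The key design point is that neither the map step nor the reduce step depends on any other record or key, which is exactly what lets a framework spread the work over many workers and only coordinate during the shuffle.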

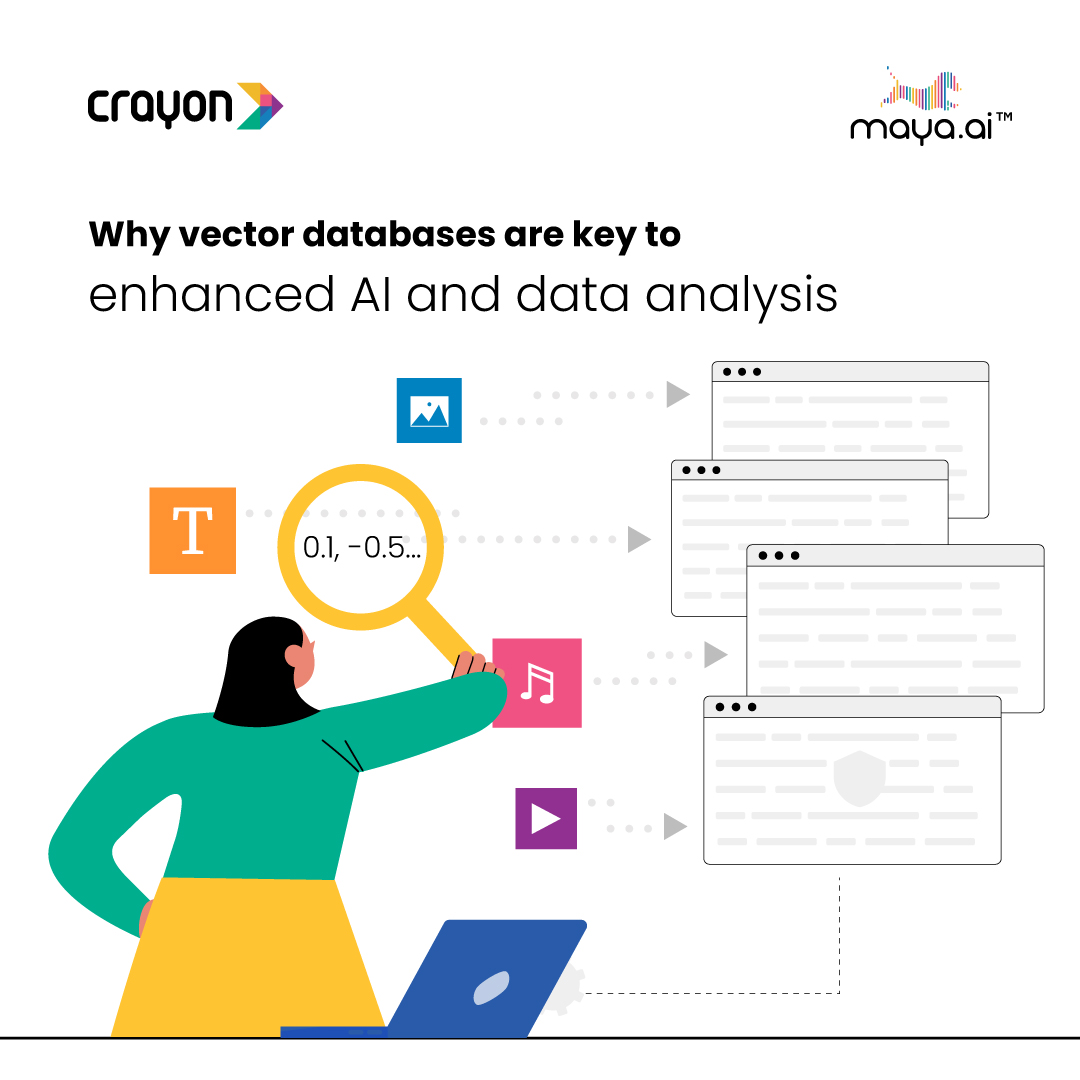
