Saed Syad: With big data, even without any predictive modeling, we can answer some of those questions by looking into the database. What we call targeting or retargeting is some sort of database query that makes it faster than doing it in memory. But with the amount of data we have, that is not an easy job. We need some sort of abstraction and predictive modeling. What type of predictive modeling can we use? All traditional predictive modeling goes through three steps: data, modeling, score. That is, I have the data, I build a model, and I use the model to score.
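The three steps named above (data, modeling, score) can be sketched in a few lines. This is only an illustration under stated assumptions: the toy records, the choice of a one-variable least-squares line, and the function names `build_model` and `score` are all hypothetical and do not come from the interview.

```python
from statistics import mean

# Step 1: data. Hypothetical toy records: (feature x, observed target y).
data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]


def build_model(records):
    """Step 2: modeling. Fit y = intercept + slope * x by ordinary least squares."""
    xs = [x for x, _ in records]
    ys = [y for _, y in records]
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in records)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def score(model, x):
    """Step 3: score. Apply the fitted model to a new, unseen record."""
    intercept, slope = model
    return intercept + slope * x


model = build_model(data)
prediction = score(model, 5.0)
print(f"intercept={model[0]:.2f}, slope={model[1]:.2f}, score(5.0)={prediction:.2f}")
```

Note that the model here is built once from a fixed data set and then only used for scoring; nothing in this sketch handles records that arrive later.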

With such a huge amount of data, the question is, “Should we sample here?” It has been proven that when you have all the data and you can process it, that is much better than using a sample. We need techniques that can support this amount of data, and there are none.

The majority of the techniques we have, such as decision trees, neural networks, and support vector machines, are iterative techniques. That means you need to run through the same data set many times to optimize the parameters. If you pass in a couple of million records, you can expect to spend 24 hours to get a result. The amount of data we have in big data needs a different approach. It is not just the amount of data but also the speed at which that data is generated. What about new data? If I have 100 million records, go to Google iPredict, upload them, and get my model back after five to ten minutes, what should I do with the 50 variables I get back? If I decide to remove one of those variables, should I go to the website again and build another model?
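To make "iterative" concrete, here is a minimal sketch of batch gradient descent that counts how many full passes over the data it needs. It is an assumption-laden illustration, not any particular library's training loop: the toy data, the single-weight model `y = w * x`, and the name `fit_iteratively` with its parameters are hypothetical.

```python
# Hypothetical toy data that follows y = 2x exactly.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]


def fit_iteratively(records, learning_rate=0.05, tolerance=1e-6, max_passes=10_000):
    """Fit y = w * x by batch gradient descent.

    Returns the fitted weight and the number of full passes over the data.
    """
    w = 0.0
    for passes in range(1, max_passes + 1):
        # One full pass: every record is read to compute a single gradient
        # of the mean squared error with respect to w.
        gradient = sum(2 * (w * x - y) * x for x, y in records) / len(records)
        w -= learning_rate * gradient
        if abs(gradient) < tolerance:
            return w, passes
    return w, max_passes


w, passes = fit_iteratively(data)
print(f"w={w:.4f} after {passes} passes over {len(data)} records")
```

Even this three-record problem is read many times before the weight settles. In a fitter like this sketch, adding new records or dropping a variable means starting again from `w = 0.0` and repeating every pass, which is the cost being described for millions of records.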

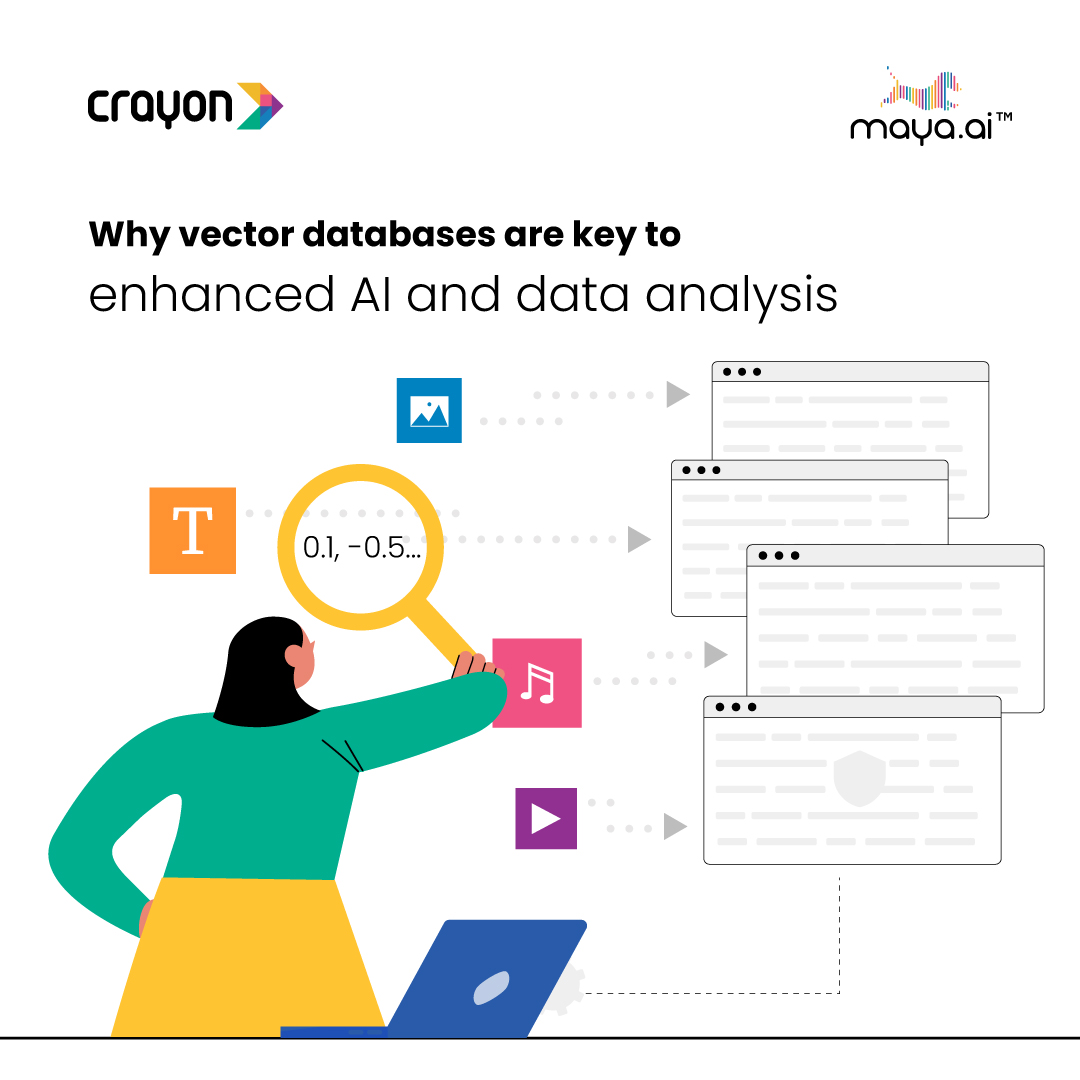
